Investigating human-robot interactions with TIAGo robot

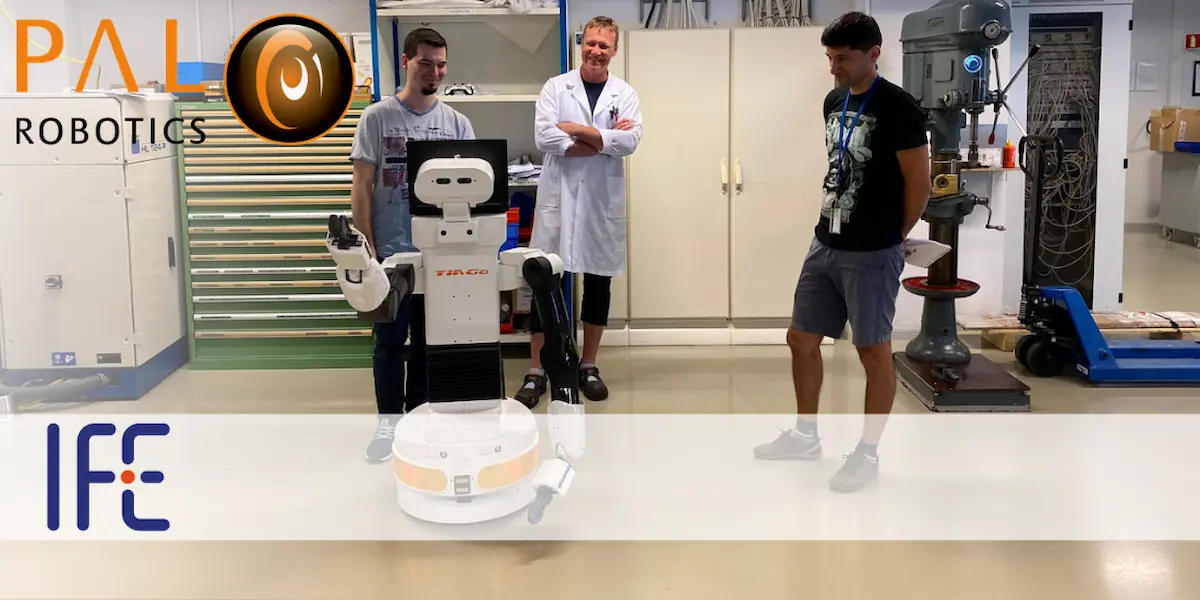

One of our TIAGo robots was recently welcomed as a new addition to the team at CASRR (Center for Advanced Sensor and Robotics Research), which is part of IFE (Institute for Energy Technology) in Norway.

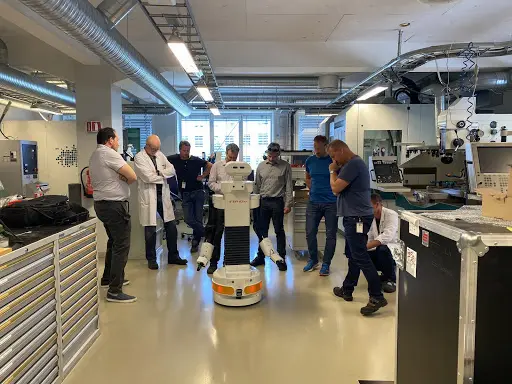

TIAGo was immediately put to use at CASRR in the Interactive Robots project, exploring the collaboration between robots and humans. The centre is particularly interested in studying the impact of the robot’s appearance (humanoid versus non-humanoid) on human perceptions and expectations of its capabilities.

CASRR brings together research on sensors, robotics and artificial intelligence to develop innovative solutions for smarter, safer and better work environments. In particular, its goal is to examine interaction, teaming and collaboration possibilities between humans and advanced technologies in highly digital and complex environments. We interviewed Alexandra Fernandes, PhD, Senior Scientist at IFE, about the CASRR team’s plans for working with TIAGo.

Established in 2019, CASRR is a multidisciplinary group whose members have backgrounds in electronic and mechanical engineering, human factors and cognitive psychology, computer science and machine learning. Its main purpose is to develop advanced sensors and to explore human-automation and human-robot interaction from an applied research perspective.

Alexandra tells us, “Our diversity enables us to take a holistic view and consider what the human needs to know about the machine, and what the machine needs to know about the human, to optimise the human-machine team.”

Background in robotics and interaction between humans and machines

Regarding her background in robotics, Alexandra told us, “My educational background is in psychology, and I have been working on human-computer interaction since I completed my PhD in 2012. The challenges of teaming humans and technology have always fascinated me, and most of my work has focused on how to improve interaction between humans and machines, taking into account the nature of the tasks at hand, their context, and the specific characteristics and capabilities of both humans and machines. In recent years my work has naturally led me to human-robot interaction topics. Of course, being a huge Asimov fan and loving sci-fi has helped me pursue this path!”

Studying the impact of the robot’s appearance (humanoid versus non-humanoid) on human perceptions and expectations of its capabilities

In the Interactive Robots project, IFE Digital Systems aims to carry out a study on human-robot interaction. Alexandra explained, “We are planning to explore interaction scenarios, with the robot’s appearance (humanoid vs non-humanoid) as the main variable. We will explore how appearance affects participants’ attitudes, perceptions (where perceived safety is a relevant issue) and collaborative intentions, as well as their expectations of the robots’ capabilities and usefulness.”

The team is interested in applying robots in both industrial and service contexts, so the application context will also be a variable in the study. Alexandra went into further detail: “We are currently defining the data collection protocols, but I can say we are planning a study in which participants will be given simple tasks covering scenarios with humanoid and non-humanoid robots, and in which the presence of the robot will be manipulated (imagined versus real presence).”

Projects with other institutions on nuclear decommissioning and cybersecurity

IFE Digital Systems has multiple ongoing robotics projects, most of them carried out with national and international consortia. Explaining these partnerships and collaborations, Alexandra told us, “The Humans and Automation Department has projects on human-robot interaction within the health sector (hospitals and home care services), such as the Norwegian Research Council project ‘Human Interactive Robotics’ (HIRo) and the Viken Regional Research Funding project ‘Safe Affordable Reliable Avatar for Homecare, controlled from a Response Center’ (SARAH).”

Alexandra continued, “There are also ongoing projects on robotics for nuclear decommissioning led by the Virtual and Augmented Reality Department (for example, the RoboDecom project), and other colleagues are exploring cybersecurity topics in social robots. We are involved in several new applications, both within Norway and internationally, and we are always open to discussing new opportunities with other partners.”

TIAGo, its features and flexibility as a research platform

Asked about the team’s plans for TIAGo, Alexandra said, “TIAGo is the first humanoid robotic platform we have acquired. It is of course exhilarating for the research team, and we are currently brainstorming several research possibilities for the near future where we can use TIAGo to explore aspects of HRI. We have been testing the robot and implementing demonstrations, but we haven’t yet moved on to empirical data collection where participants can directly collaborate with TIAGo. Our mobile manipulator arrived a couple of months ago, and we are currently exploring its basic functionalities to define the next steps for our research needs.”

Explaining why the team chose TIAGo, Alexandra told us, “The reason we chose TIAGo lies essentially in the flexibility the robot provides. We are a multidisciplinary team with different interests and backgrounds, and we needed a robot that could be easily adapted to several lines of research, not only human interaction but also artificial intelligence, automation and sensing capabilities. Likewise, we needed a platform that could be useful both in industrial contexts (our traditional applied research arena) and in service and home assistance contexts (more recent application fields we are exploring).”

Aside from their work with TIAGo, the team has an established partnership with Halodi Robotics and uses its EVE humanoid robot in current projects. IFE Digital Systems also has other robots in use across different projects, such as DOBOT’s Magician Q3, Universal Robots’ UR5 and UR16 arms, Clearpath Robotics’ JACKAL platform and No Isolation’s AV1 robot.

Challenges in HRI (human-robot interaction) and acceptance by humans

On the challenges in robotics, and particularly in HRI, Alexandra’s field of work, she told us, “I believe the research community is starting to build a knowledge base that will become the backbone of HRI as a discipline. There are several challenges we face today, but I will highlight two that I consider particularly noteworthy: one more closely linked with the research community, and the other with the applied context.

“The first is a challenge common to all scientific disciplines and relates to the replication of findings and their generalization: we need predictable outcomes in HRI studies so that we can map variables and move the field forward. We have yet to define the ground rules for HRI research regarding methods, tools and procedures, and we have yet to find meaningful taxonomies that would allow comparisons between different studies.

“The second challenge is closely linked to human-centred design and requires that human, interaction design and organizational factors be considered and integrated into the development process of robots from the early stages. This improves their chances of success by raising the likelihood of acceptance and usefulness, and by ensuring that the robots add value to whatever context, task and system they are to become part of. From previous experience with several technologies and in multiple contexts of application, it is clear that without this background work, finding strong ‘business cases’ and ensuring market uptake can be problematic.”

How overall systems will evolve with the increasing presence of intelligent agents

On how systems will evolve with new technologies, Alexandra explained, “Our research is human-centred, and we are interested in studying robotics topics mostly as a means to understand how people can interact and collaborate with robots in various contexts (from workplaces to the private home). We are also fascinated by how human roles and overall systems will evolve with the increasing presence of intelligent agents, robots and other fringe technology applications.

“As such, we see the human-robot interaction field as an ideal setting to explore how people can team up with, use, benefit from and grow with robots, and improve their experiences in both formal and informal scenarios. Creating positive interaction between humans and robots will involve a lot of AI exploration, a better understanding of hybrid teams (human-automation) and a lot of investment from technical, scientific and social disciplines.”

The future of HRI, including coding of human behaviour in response to robots’ actions

When asked about the roadmap for HRI over the next five years, Alexandra told us, “I believe that, in more than one way, we are still in the very early stages of human-robot interaction research, and this is what makes it exciting. There is still a long way to go in defining methods and developing tools to explore, assess and understand human-robot interaction. This is true for situations where we talk about ‘imagined interaction’ as well as for real, direct interaction.

“In the first case, we might, for example, ask participants to fill in a questionnaire about their expectations of and attitudes towards robots, either in general or based on a description, picture or video of a robot. But of course, in situations where we want to assess the interaction directly, with the participant and the robot physically in the same space, the methodological aspects become particularly interesting.

“There are several challenges, covering aspects such as the robot’s capabilities, the safety of the interaction, which aspects to measure (for instance, verbal/non-verbal communication, distance from the robot, coding of human behaviour in response to the robot’s actions) and how to measure them (observations, questionnaires, counting interactions, measuring physical distance, categorizing forms of communication, etc.).”

Finally, Alexandra added, “We are slowly but steadily evolving in the field. Interest in HRI is exploding both inside and outside academia, which makes me hope that within five years we might be in a position to answer many more questions on how humans and robots can, should, want to or have to interact with each other.”

We would like to thank Dr Alexandra Fernandes for taking the time to talk with us. If you would like to know more about TIAGo as a research platform, do not hesitate to get in touch. To learn more about our robots and research, check out our blog!